Master model evaluation with our 2025 guide. Learn essential metrics, fairness assessment, robustness testing, and business alignment strategies to measure what truly matters in your ML projects.

Introduction: The Paradigm Shift in Model Evaluation

In the rapidly evolving landscape of artificial intelligence and machine learning, Model Evaluation has emerged as the critical discipline that separates successful AI implementations from costly failures. As we progress through 2025, the field of Model Evaluation has undergone a fundamental transformation—moving beyond simple accuracy metrics to a comprehensive framework that assesses real-world impact, ethical considerations, and business value. This evolution reflects the growing understanding that a model’s performance on historical data means little if it cannot deliver tangible value while operating responsibly in production environments.

The contemporary approach to Model Evaluation represents a fundamental shift from technical validation to comprehensive assessment. Where earlier practices focused primarily on statistical measures and optimization metrics, modern evaluation encompasses the entire ecosystem in which models operate. This includes not only traditional performance metrics but also fairness assessments, robustness testing, business impact analysis, and continuous monitoring frameworks. The stakes have never been higher—according to recent industry analysis, organizations that implement comprehensive Model Evaluation frameworks experience significantly higher ROI from their AI initiatives and dramatically reduce production incidents. These improvements stem from catching issues early, making better deployment decisions, and ensuring models continue to perform as expected in dynamic real-world conditions.

The transformation of Model Evaluation practices in 2025 reflects several converging trends. The increasing complexity of models, particularly with the rise of large language models and sophisticated neural architectures, demands more nuanced evaluation approaches. Simultaneously, growing regulatory scrutiny and public awareness about AI ethics have made fairness and transparency non-negotiable components of model assessment. Furthermore, the recognition that models exist to serve business objectives has shifted focus from purely technical metrics to business-impact measurements. This guide synthesizes these developments into a comprehensive framework for Model Evaluation that addresses the challenges and opportunities of the current AI landscape.

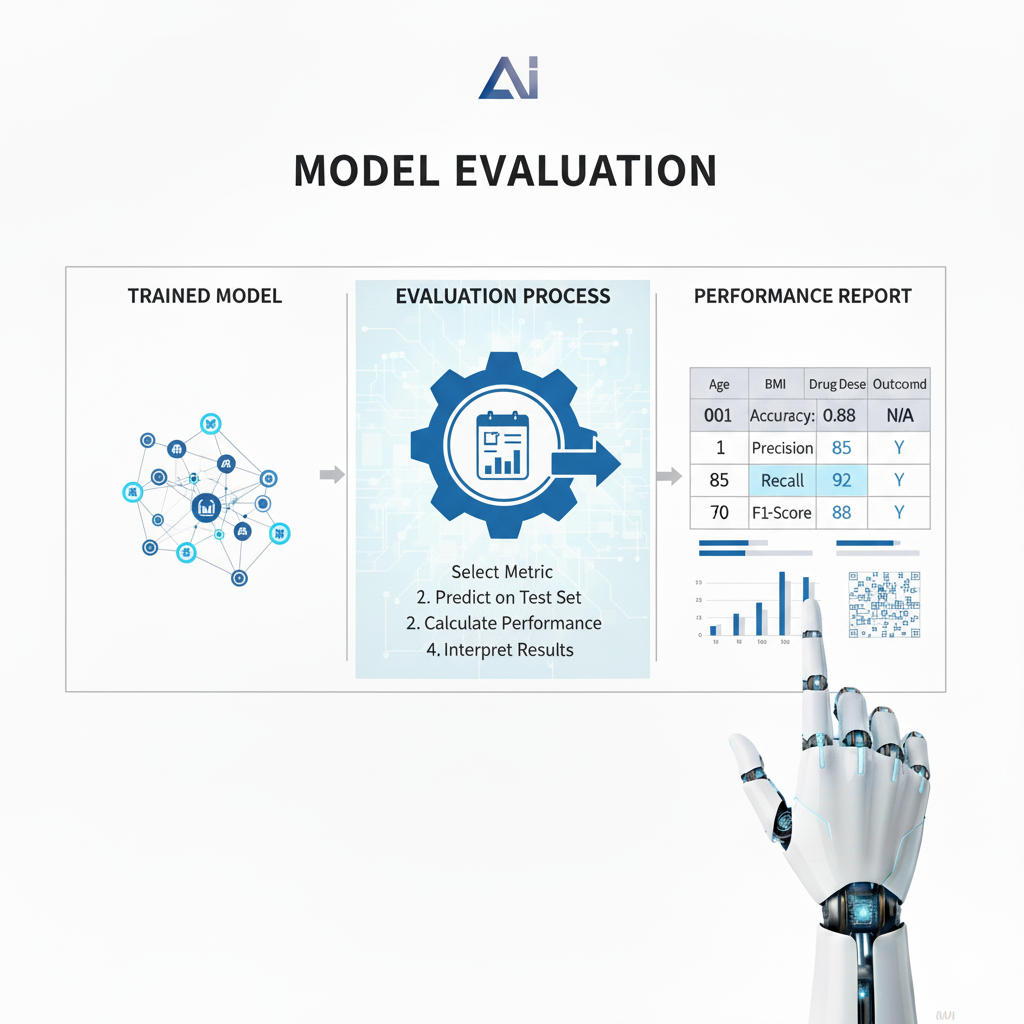

Part 1: The Foundation of Model Evaluation

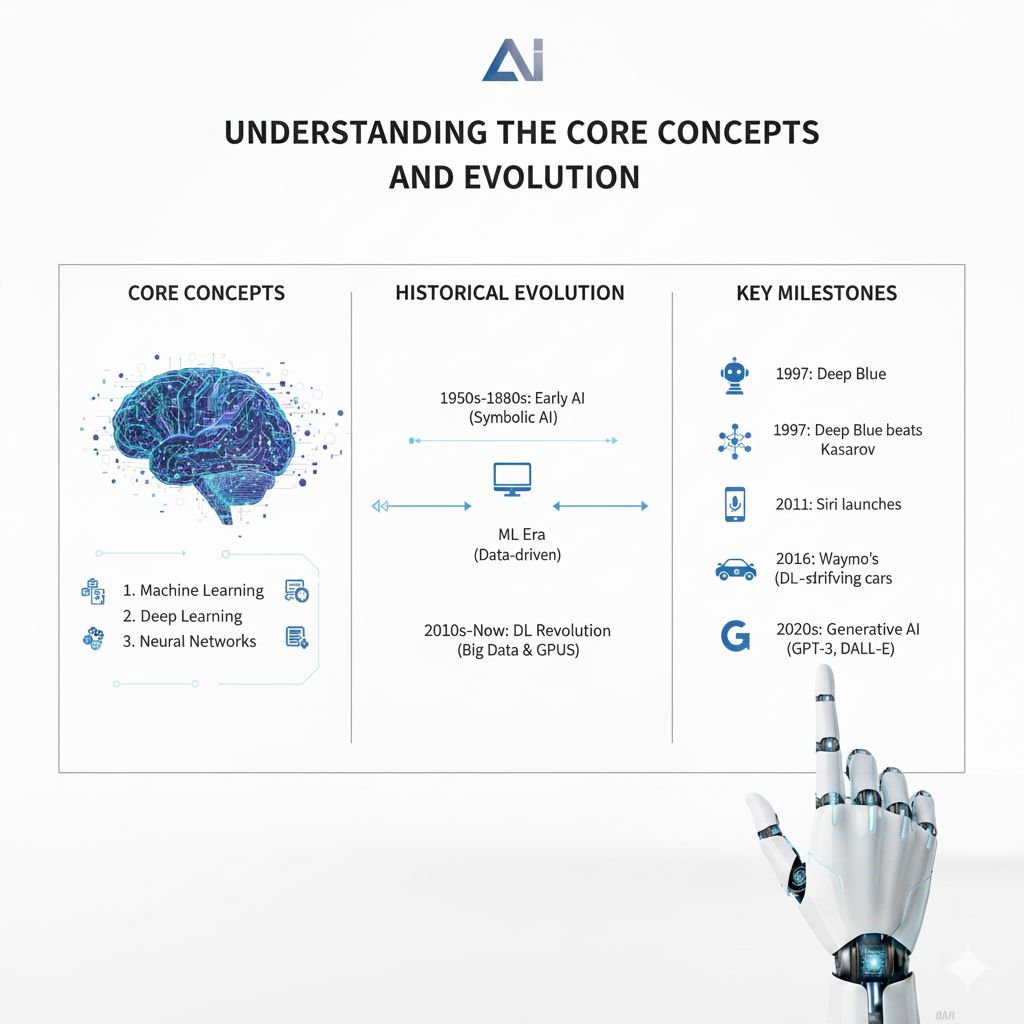

Understanding the Core Concepts and Evolution

The foundation of effective Model Evaluation begins with a clear understanding of what we’re truly assessing and why traditional approaches often fall short. At its core, Model Evaluation represents the systematic process of assessing a machine learning model’s performance, reliability, and suitability for deployment in real-world scenarios. This process must answer three fundamental questions that determine a model’s ultimate value: how well it performs on unseen data, whether it generalizes across different populations and scenarios, and perhaps most importantly, whether it’s truly ready for production deployment.

The distinction between model validation and Model Evaluation has become increasingly crucial in modern practice. While validation typically focuses on parameter tuning and hyperparameter optimization during the development phase, Model Evaluation provides the final, comprehensive assessment of a model’s readiness for real-world deployment. This distinction becomes particularly important as models grow more complex and their potential impact expands across organizations and society. A model might validate perfectly during development yet fail completely in production due to issues with data drift, concept drift, or unforeseen interaction effects.

The evolution of Model Evaluation in 2025 reflects several key trends that have reshaped industry practices. There has been a decisive shift from technical metrics to business impact measurements, recognizing that a model’s ultimate value lies in its ability to drive organizational objectives rather than optimize abstract statistical measures. This shift has been accompanied by an increased focus on ethical AI, where Model Evaluation now includes comprehensive fairness, bias, and ethical impact assessments as standard practice.

Additionally, evaluation is no longer a one-time event but a continuous process integrated with production monitoring systems, ensuring models remain effective as conditions change. Finally, Model Evaluation has become inherently cross-functional, requiring collaboration between technical teams, business stakeholders, and legal/compliance experts to ensure all perspectives are considered.

Part 2: Essential Metrics for Comprehensive Model Evaluation

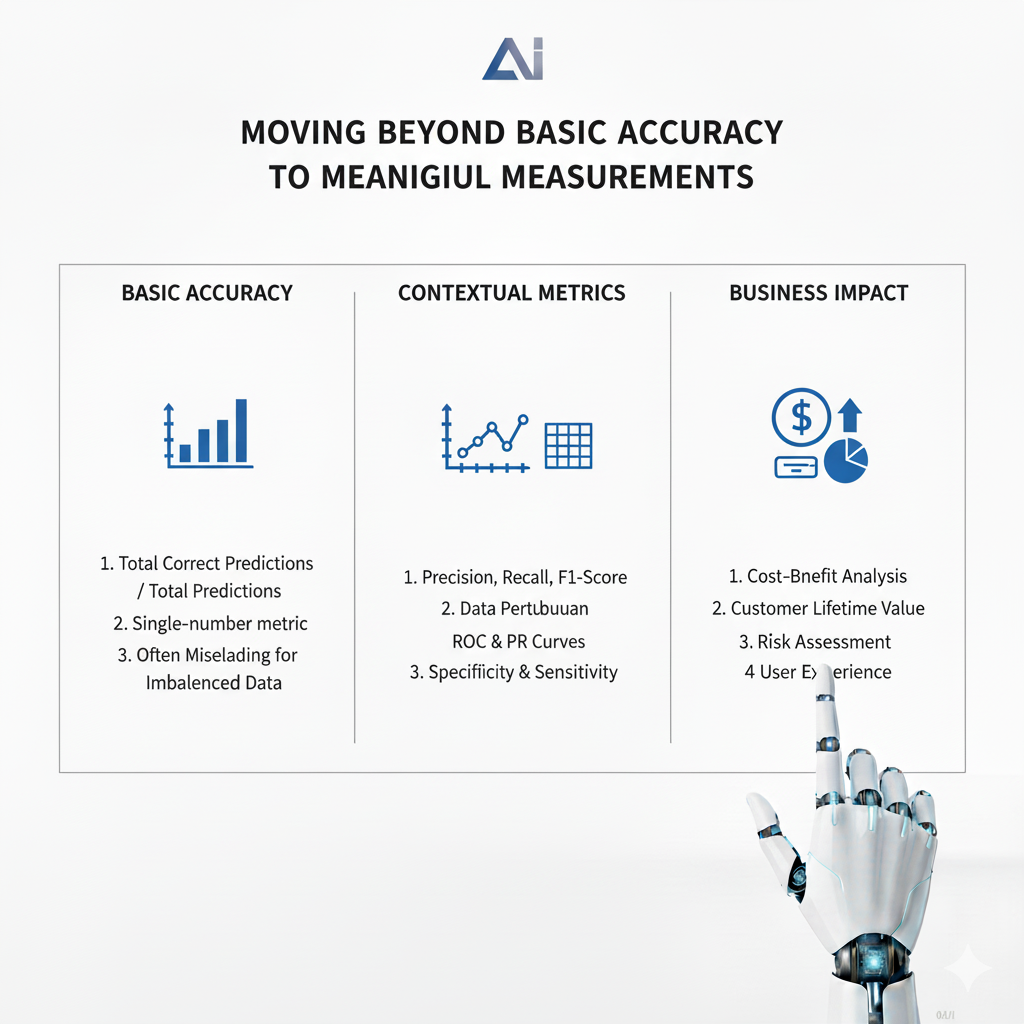

Moving Beyond Basic Accuracy to Meaningful Measurements

The metrics used in Model Evaluation have evolved significantly to address the limitations of traditional approaches while providing deeper insights into model behavior and impact. While accuracy remains the most intuitive metric for classification problems, representing the proportion of correct predictions among all predictions, modern evaluation recognizes that accuracy alone often provides a misleading picture, particularly in imbalanced datasets or scenarios where different types of errors have asymmetric costs.

The evolution of classification metrics has led to widespread adoption of precision, recall, and F1-score as fundamental components of Model Evaluation. Precision measures how many of the predicted positive cases are actually positive, making it crucial for applications where false positives carry significant costs, such as fraud detection or medical diagnosis.

Recall measures how many of the actual positive cases are correctly identified, essential for scenarios where missing positive cases has severe consequences, like cancer screening or security threat detection. The F1-score provides a harmonic mean of precision and recall, offering a single metric that balances both concerns, though modern evaluation typically examines all three metrics across different thresholds to understand the full trade-off space.

For regression problems, Model Evaluation employs a different set of metrics tailored to continuous outcomes. Mean Absolute Error provides a straightforward interpretation of average prediction error magnitude and remains robust to outliers, making it valuable for understanding typical performance.

Mean Squared Error and Root Mean Squared Error penalize larger errors more heavily, making them suitable for applications where large errors are particularly undesirable, such as financial forecasting or safety-critical systems. R-squared and adjusted R-squared measure the proportion of variance explained by the model, with the adjusted version accounting for the number of predictors to prevent overfitting to complex models.

The most significant advancement in Model Evaluation metrics comes from the integration of probabilistic and business-oriented measurements. Probabilistic metrics like Brier Score and Log Loss evaluate the quality of predicted probabilities rather than just class labels, while calibration metrics assess how well predicted probabilities match actual outcomes—a crucial consideration for decision-making under uncertainty.

Simultaneously, business-oriented metrics have emerged that directly measure commercial impact, including expected value frameworks that convert model predictions to monetary value, customer lifetime value impact assessments that measure long-term business value, and cost-sensitive metrics that incorporate asymmetric costs of different error types based on actual business consequences.

Part 3: Beyond Traditional Metrics – The 2025 Model Evaluation Framework

Addressing Fairness, Robustness, and Explainability

The comprehensive Model Evaluation framework of 2025 extends far beyond traditional performance metrics to address critical aspects of model behavior that determine real-world viability. The evaluation of fairness and bias has become an essential component, driven by both ethical imperatives and regulatory requirements.

Understanding algorithmic bias requires examining multiple dimensions, including group fairness that ensures equal outcomes across different demographic groups, individual fairness that guarantees similar individuals receive similar predictions, and counterfactual fairness that ensures predictions remain consistent in hypothetical scenarios where protected attributes change.

The practical implementation of bias assessment in Model Evaluation involves multiple complementary metrics. Demographic parity examines whether prediction rates are equal across different groups, while equalized odds requires that true positive and false positive rates are equivalent across groups. Predictive parity ensures that precision remains consistent, meaning the reliability of positive predictions doesn’t vary unfairly between populations. These statistical measures must be complemented by qualitative assessment and domain expertise, as purely mathematical definitions of fairness can sometimes conflict or prove insufficient for capturing nuanced real-world impacts.

Robustness and stability assessment represents another crucial dimension of modern Model Evaluation. This involves testing model performance under adversarial conditions, including simulated attacks that attempt to manipulate model outputs, input perturbation analysis that measures performance degradation with noisy data, and boundary case evaluation that assesses performance on edge cases and outliers.

Additionally, distribution shift evaluation has become increasingly important, involving covariate shift detection to identify when input distribution changes, concept drift assessment to detect when relationships between inputs and outputs evolve, and temporal stability analysis to evaluate performance consistency over time as world conditions change.

Explainability and interpretability have emerged as non-negotiable requirements for Model Evaluation in most practical applications. Model-agnostic interpretation methods like SHAP (SHapley Additive exPlanations) provide a unified framework for understanding feature contributions across any model type, while LIME (Local Interpretable Model-agnostic Explanations) creates local approximations to explain individual predictions.

Partial dependence plots help visualize relationships between features and predictions, revealing nonlinearities and interaction effects. For specific model types, native interpretation methods include feature importance scores for tree-based models, attention mechanisms for neural networks that reveal focus areas, and rule extraction techniques that derive human-readable rules from complex models, facilitating stakeholder understanding and trust.

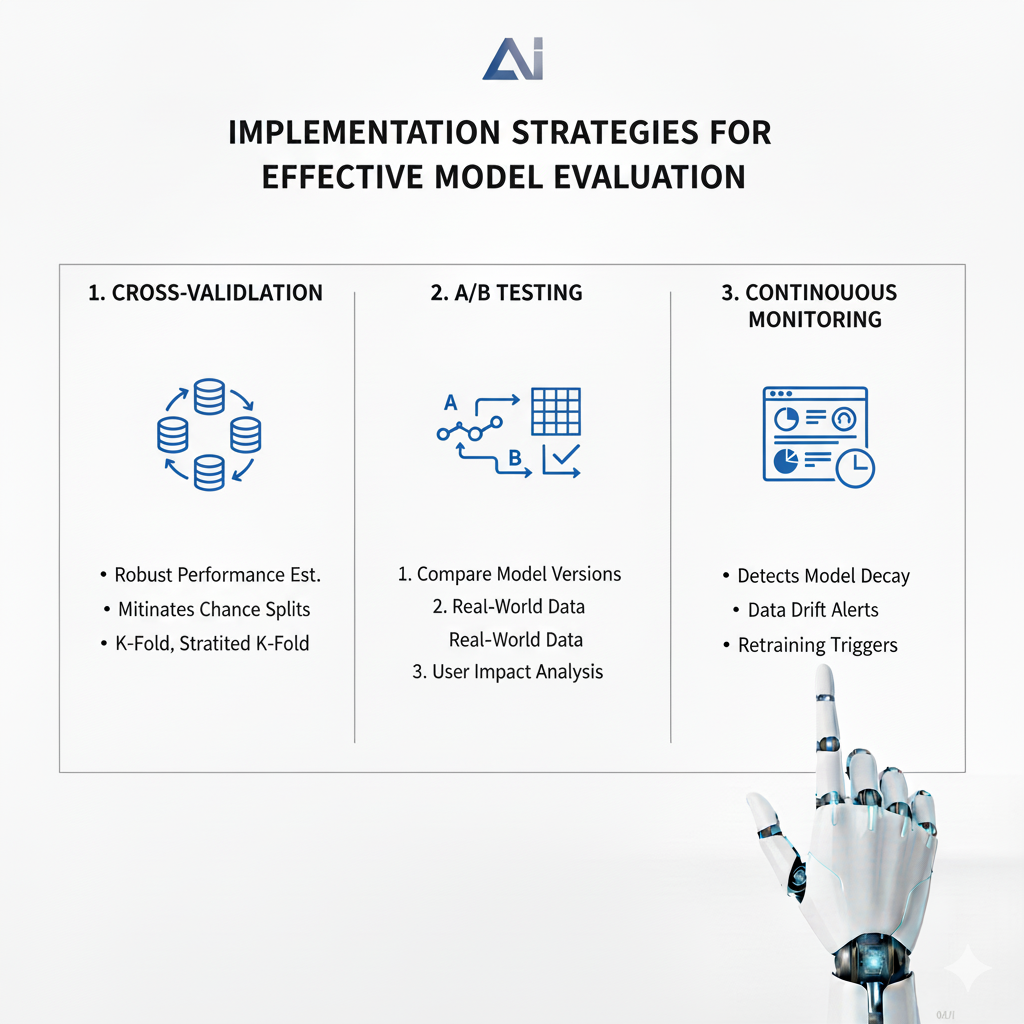

Part 4: Implementation Strategies for Effective Model Evaluation

From Theory to Practice in Real-World Scenarios

The implementation of effective Model Evaluation requires careful consideration of methodological approaches that ensure reliable, generalizable results while accounting for practical constraints. Cross-validation techniques form the backbone of robust evaluation, with k-fold cross-validation serving as the standard approach for most scenarios.

This method involves partitioning data into k folds, using k-1 folds for training and one fold for testing, then rotating through all folds to obtain comprehensive performance estimates. For imbalanced datasets, stratified k-fold cross-validation preserves the percentage of samples for each class across folds, preventing skewed performance estimates.

Temporal data introduces unique challenges for Model Evaluation that require specialized cross-validation approaches. Standard random splitting can create data leakage by allowing models to inadvertently learn from future information.

Time series cross-validation addresses this through forward chaining methods that train on past data and test on future data, expanding window approaches that gradually increase the training set over time, and rolling window techniques that maintain a fixed training window that moves through time. These approaches provide more realistic performance estimates for forecasting and other time-sensitive applications.

Nested cross-validation has emerged as a best practice for Model Evaluation when both model selection and performance estimation are required. This approach uses an outer loop for performance estimation and an inner loop for model selection, preventing optimistic bias that occurs when the same data is used for both purposes.

The implementation involves partitioning data into multiple outer folds, with each outer fold further divided into inner folds for hyperparameter tuning, ensuring that performance estimates reflect true generalization capability rather than overfitting to the validation process.

The strategic splitting of data into training, validation, and test sets remains fundamental to reliable Model Evaluation. Traditional splits using ratios like 60-20-20 work well for moderate-sized datasets, while large datasets might use 98-1-1 splits to maximize training data. For small datasets, specialized strategies like repeated cross-validation or bootstrapping provide more stable estimates.

Temporal splitting requires strict chronological separation, with all training data preceding validation data, which in turn precedes test data. Implementing appropriate gaps between splits helps prevent leakage from near-boundary observations. Stratified splitting maintains the distribution of important variables across splits, particularly crucial for rare classes or subgroups where random splitting might create unrepresentative subsets.

Statistical significance testing provides the mathematical foundation for confident conclusions in Model Evaluation. McNemar’s test offers a computationally efficient method for comparing paired binary classification results, while the Wilcoxon signed-rank test provides a non-parametric approach for comparing model performances across multiple datasets or folds.

For comparing multiple models simultaneously, the Friedman test with Nemenyi post-hoc analysis controls family-wise error rates while identifying specific performance differences. Confidence intervals, whether derived through bootstrap methods or analytical approaches, quantify the uncertainty in performance estimates, helping stakeholders understand the reliability of evaluation results and make informed deployment decisions.

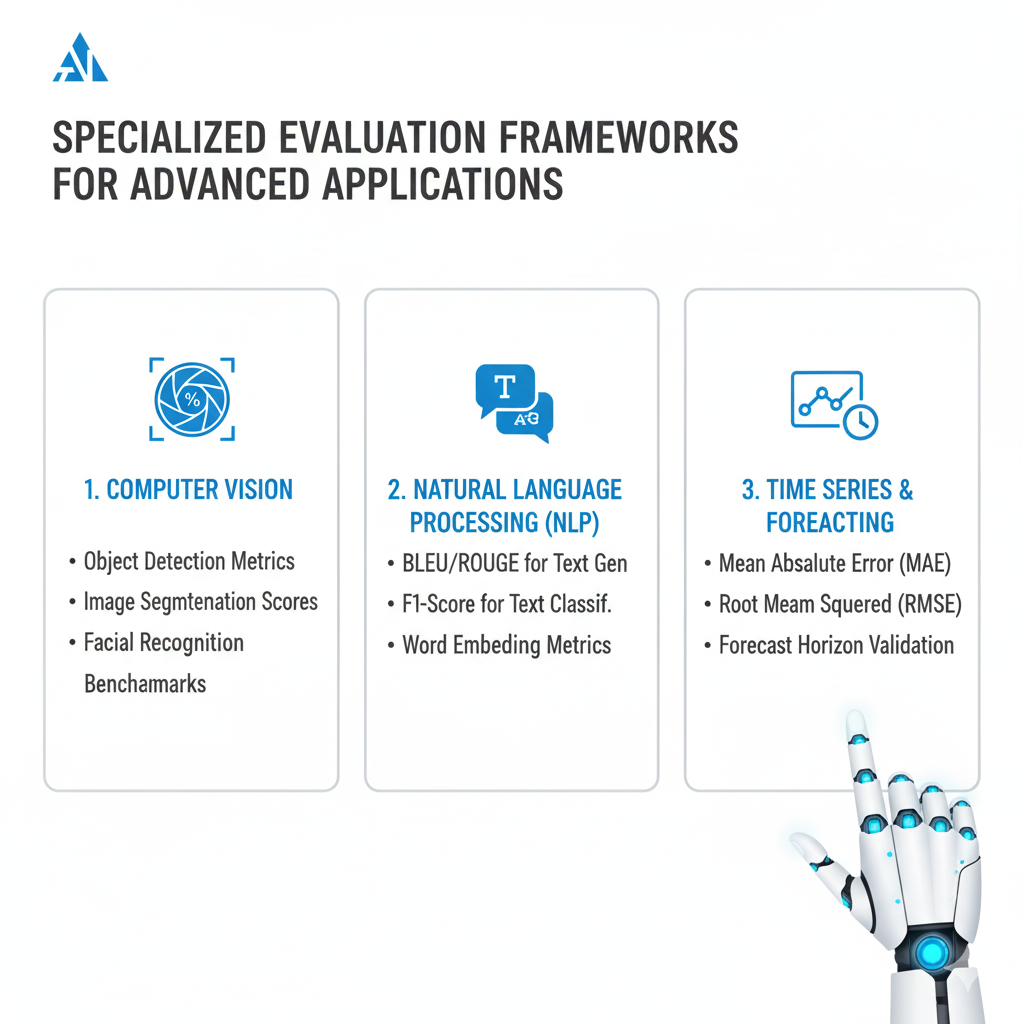

Part 5: Specialized Evaluation Frameworks for Advanced Applications

Tailoring Evaluation to Model Types and Domains

As machine learning applications have diversified, Model Evaluation frameworks have evolved to address the unique characteristics and requirements of different model types and problem domains. Deep learning models, particularly in domains like natural language processing and computer vision, require specialized metrics that capture aspects of performance beyond traditional classification or regression measures.

For language models, perplexity measures how well a probability distribution predicts a sample, with lower values indicating better performance. Text generation tasks employ metrics like BLEU and ROUGE that compare machine-generated text to human references based on n-gram overlap and semantic similarity. Image generation quality is assessed through metrics like Structural Similarity Index that compare generated images to references across multiple dimensions including luminance, contrast, and structure.

The evaluation of deep learning models extends beyond final output metrics to include analysis of training dynamics and internal representations. Loss landscape analysis helps understand optimization challenges and model stability by visualizing the surface that optimization algorithms navigate.

Gradient flow analysis identifies issues like vanishing or exploding gradients that can impede training, while activation pattern analysis examines how information is represented and transformed through network layers, providing insights into what models have learned and potential failure modes. These diagnostic approaches have become increasingly important as model complexity grows, helping developers understand not just if models work but how and why they work.

Unsupervised learning presents unique challenges for Model Evaluation since ground truth labels are typically unavailable. Clustering quality is assessed through metrics like silhouette score, which measures both cohesion (how similar objects are within clusters) and separation (how distinct clusters are from each other).

The Calinski-Harabasz index evaluates clustering quality through the ratio of between-cluster dispersion to within-cluster dispersion, while the Davies-Bouldin index measures average similarity between clusters, with lower values indicating better separation. For anomaly detection, evaluation often focuses on precision at k, which measures the proportion of true anomalies among the top k most anomalous predictions, making it practical for scenarios where investigating all predictions is infeasible.

Reinforcement learning introduces yet another dimension of complexity to Model Evaluation, requiring assessment of learned policies rather than static predictions. The primary metric is cumulative reward, measuring the total reward obtained over episodes, but this must be complemented by measures of sample efficiency that evaluate how quickly policies learn from experience. In real-world applications, safety and robustness evaluation becomes crucial, including measurement of constraint violation frequency, worst-case performance across different environments, and assessment of exploration safety during training. These comprehensive evaluation approaches ensure that reinforcement learning systems can be deployed with confidence in complex, dynamic environments.

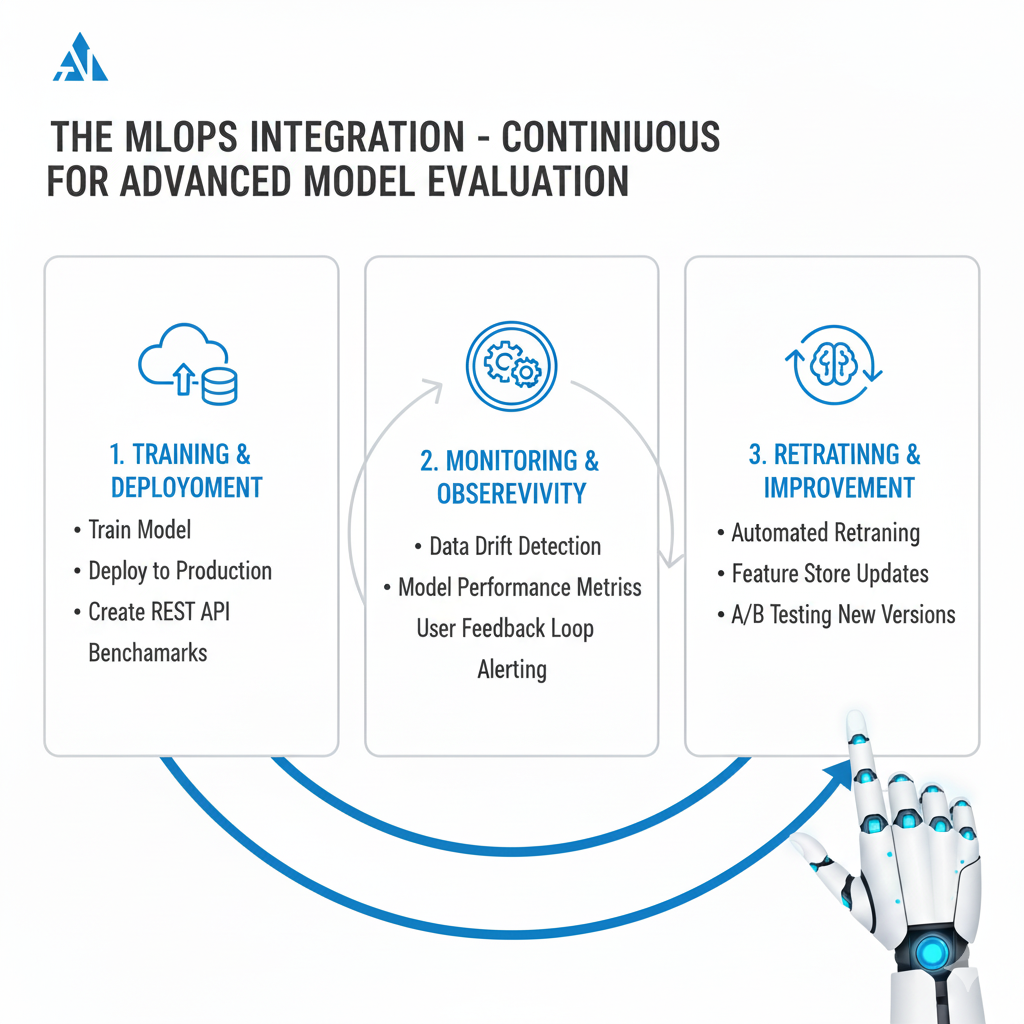

Part 6: The MLOps Integration – Continuous Model Evaluation

Building Sustainable Evaluation Practices

The integration of Model Evaluation into MLOps practices represents one of the most significant advancements in how organizations manage machine learning systems. Automated evaluation pipelines have become essential for scaling ML operations while maintaining quality and reliability. Continuous integration and deployment pipelines for machine learning incorporate automated testing against performance thresholds, regression detection for model updates, and automated rollback mechanisms that trigger when evaluation results indicate potential issues. These automated processes ensure that evaluation occurs consistently across all model changes and deployments, preventing quality degradation as systems evolve.

Evaluation automation extends beyond the initial deployment phase to include scheduled re-evaluation cycles that periodically assess model performance on fresh data, trigger-based evaluation that responds to detected data drift or concept drift, and automated report generation that keeps stakeholders informed about model health and performance. This automation is particularly crucial for organizations deploying numerous models across different business units, ensuring that evaluation practices scale effectively without requiring manual intervention for each model or update.

Monitoring and alerting systems form the operational backbone of continuous Model Evaluation in production environments. Performance monitoring involves real-time tracking of key metrics, statistical process control for detecting metric stability issues, and automated anomaly detection that identifies unusual patterns in model behavior.

Data quality monitoring complements performance tracking by examining feature distribution stability, monitoring missing value rates, and implementing validity checks that ensure input data meets expected standards. Together, these monitoring capabilities provide early warning of potential issues before they significantly impact business operations or decision quality.

Model governance and compliance have become integral components of production Model Evaluation, particularly for organizations operating in regulated industries or handling sensitive data. Audit trails maintain complete model lineage tracking, including data sources, preprocessing steps, training parameters, and evaluation results across model versions.

This comprehensive history supports reproducibility, debugging, and regulatory compliance. Evaluation result history enables tracking of model performance over time, identifying gradual degradation or sudden changes that might indicate emerging issues. For regulated environments, decision logging captures specific predictions and their contexts, supporting explainability requirements and enabling retrospective analysis of model behavior.

Compliance reporting automation has emerged as a critical capability for organizations subject to regulatory requirements around algorithmic fairness, transparency, and accountability. Automated systems generate standardized reports documenting bias and fairness assessments, performance certification for critical applications, and compliance evidence for regulatory submissions. These automated reporting capabilities reduce the overhead of compliance while ensuring consistency and completeness across the model portfolio, enabling organizations to demonstrate responsible AI practices to regulators, customers, and other stakeholders.

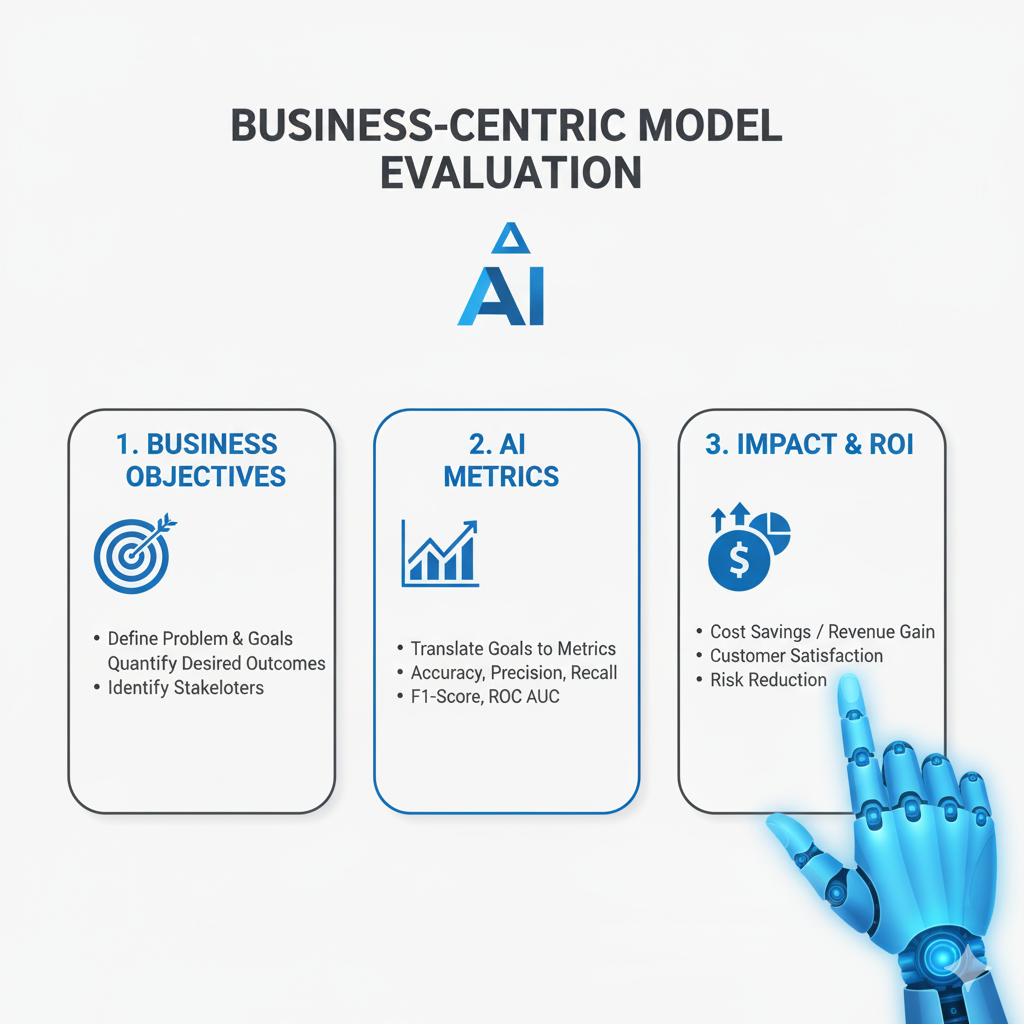

Part 7: Business-Centric Model Evaluation

Connecting Technical Performance to Organizational Value

The ultimate purpose of Model Evaluation is to ensure that machine learning models deliver tangible business value, making the translation between technical metrics and business impact a crucial capability. ROI calculation frameworks have evolved to provide comprehensive assessment of model value, moving beyond simple accuracy comparisons to sophisticated cost-benefit analysis that accounts for implementation costs, operational expenses, and both direct and indirect benefits.

Error cost quantification assigns specific monetary values to different types of model mistakes based on their business consequences, while opportunity cost assessment evaluates the value lost when models fail to identify positive opportunities or make optimal decisions.

This business-oriented approach to Model Evaluation requires deep collaboration between technical teams and business stakeholders to ensure that evaluation criteria reflect true business priorities rather than abstract statistical measures. The development of shared evaluation frameworks that both technical and non-technical stakeholders can understand and trust has become a critical success factor for AI initiatives.

These frameworks typically include customized dashboards and reports that highlight business-relevant metrics while providing drill-down capabilities to technical details when needed for debugging or improvement.

Stakeholder communication represents a particularly challenging aspect of business-centric Model Evaluation. Effective communication requires translating technical concepts and metrics into business language that executives and domain experts can understand and act upon.

This includes creating executive dashboards that highlight business metrics rather than technical details, developing translation frameworks that explain technical performance in terms of business impact, and establishing risk assessment communication protocols that clearly articulate potential downsides and mitigation strategies. The goal is to enable informed decision-making about model deployment and use, ensuring that stakeholders understand both the potential benefits and the limitations of AI systems.

The integration of Model Evaluation with decision-making processes represents the culmination of business-centric assessment. Confidence-based decision making incorporates prediction uncertainty into business processes, enabling better risk management and more nuanced use of model outputs. Risk-adjusted model outputs weight predictions based on their reliability and potential impact, while quality of service level agreements establish clear expectations about model performance and availability.

Human-in-the-loop evaluation assesses the effectiveness of human-AI collaboration, measures appropriate automation levels for different decisions, and evaluates user trust and adoption patterns. These assessments ensure that models are deployed in ways that complement human expertise and organizational processes rather than disrupting them.

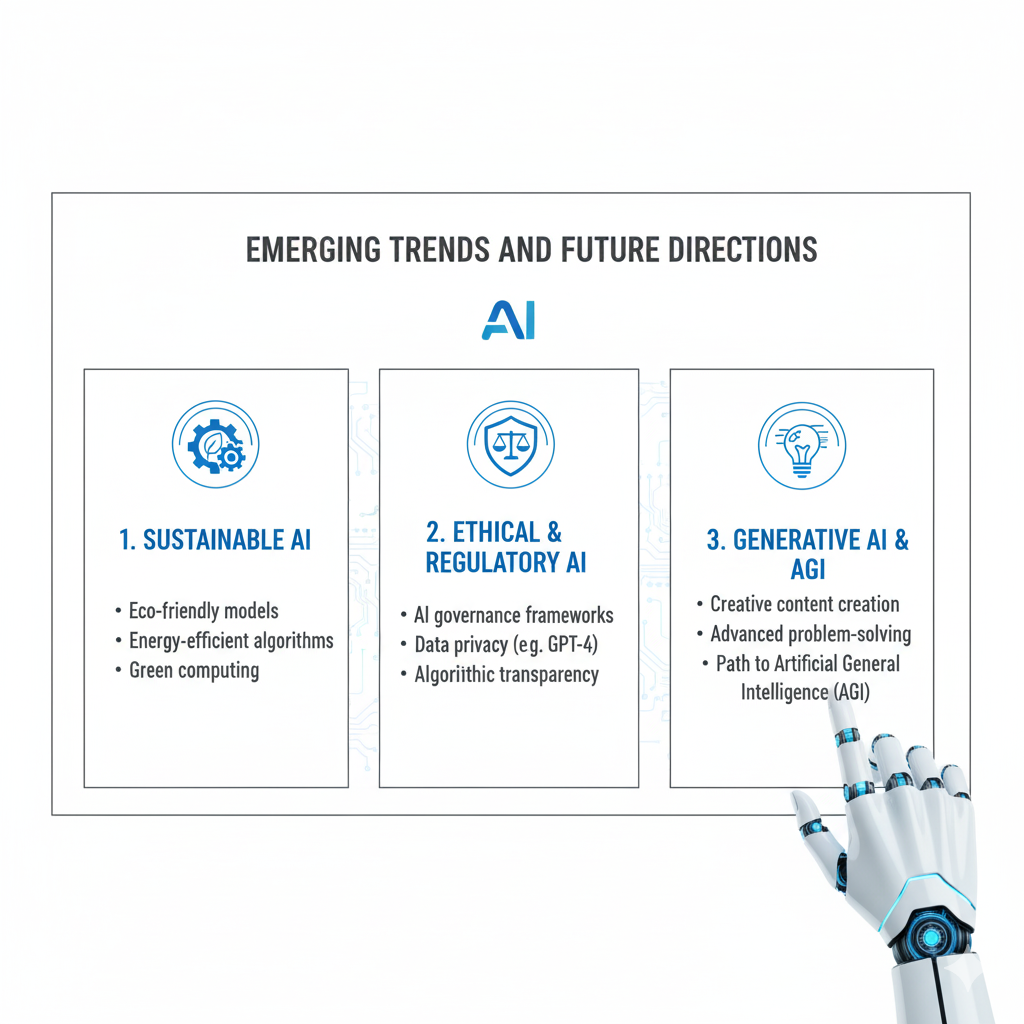

Part 8: Emerging Trends and Future Directions

Preparing for the Next Generation of Model Evaluation

The field of Model Evaluation continues to evolve rapidly, with several emerging trends shaping its future direction. Causal evaluation methods represent a significant advancement beyond correlation-based assessment, aiming to understand not just what models predict but why they make specific predictions and how interventions might affect outcomes.

Counterfactual evaluation frameworks assess how model predictions would change under different hypothetical scenarios, while treatment effect estimation validation ensures that models accurately measure the impact of interventions rather than simply identifying associations. These approaches are particularly valuable for decision support systems where understanding causal relationships is essential for effective action.

Federated learning presents unique challenges for Model Evaluation due to its decentralized nature and privacy constraints. Evaluation in these environments requires privacy-preserving performance assessment techniques that can aggregate results across multiple data sources without exposing sensitive information. Cross-silo evaluation techniques address the challenges of assessing models trained on data distributed across different organizations or business units, while aggregation quality measurement ensures that model combination methods maintain or improve performance compared to centralized approaches. These capabilities are becoming increasingly important as regulatory changes and privacy concerns make centralized data collection more difficult.

Quantum machine learning, though still emerging, requires specialized Model Evaluation approaches that account for the unique characteristics of quantum systems. Quantum model metrics focus on demonstrating quantum advantage over classical approaches, assessing noise resilience in realistic hardware conditions, and evaluating the performance of hybrid quantum-classical algorithms that combine both paradigms. As quantum hardware becomes more accessible, these evaluation methods will become increasingly important for organizations exploring quantum approaches to optimization, simulation, and other challenging problems.

The expanding scope of Model Evaluation now includes broader considerations of ethical AI and societal impact. Long-term impact assessment examines how AI systems affect society over extended periods, including environmental impact measurement that accounts for the computational resources required for training and inference, and economic redistribution effects that analyze how AI systems shift opportunities and resources across different population segments. These assessments help organizations understand and mitigate potential negative consequences while maximizing positive social impact.

Transparency and accountability frameworks have evolved to include explainability certification processes that provide standardized assessment of model interpretability, auditability standards that ensure models can be effectively examined by internal and external reviewers, and responsibility assignment frameworks that clearly delineate roles and accountabilities for model behavior and outcomes. These frameworks help build trust with regulators, customers, and the public while ensuring that organizations can effectively manage the risks associated with AI deployment.

Conclusion: Building a Culture of Comprehensive Model Evaluation

The landscape of Model Evaluation in 2025 represents a mature, comprehensive discipline that goes far beyond simple accuracy measurements. Successful organizations understand that robust Model Evaluation is not just a technical necessity but a strategic imperative that directly impacts business outcomes, regulatory compliance, and ethical responsibility. The evolution from isolated technical assessment to integrated, continuous evaluation reflects the growing recognition that models exist within complex ecosystems and must be evaluated accordingly.

The future of Model Evaluation lies in its deeper integration across the entire AI lifecycle—from initial concept development through production deployment and eventual retirement. As AI systems become more sophisticated and their impact more profound, the role of Model Evaluation in ensuring responsible, effective, and valuable AI implementations will only continue to grow. Emerging challenges including increasing model complexity, evolving regulatory requirements, and growing societal expectations will drive further innovation in evaluation methods and practices.

Organizations that master comprehensive Model Evaluation will enjoy significant competitive advantages through higher ROI from AI initiatives, reduced risk of production failures, stronger regulatory compliance, enhanced customer trust and satisfaction, and more ethical and fair AI systems. These benefits stem not just from individual evaluation techniques but from building a culture that values evidence-based assessment, continuous improvement, and responsible innovation.

The ultimate goal of Model Evaluation in 2025 is not just to measure model performance, but to ensure that AI systems deliver real value while maintaining the highest standards of reliability, fairness, and transparency. This requires moving beyond technical metrics to consider business impact, ethical implications, and operational viability throughout the model lifecycle. By implementing the comprehensive frameworks and methodologies outlined in this guide, organizations can confidently navigate the complex landscape of modern AI and build systems that truly measure what matters—delivering sustainable value while operating responsibly in dynamic real-world environments.

The journey toward comprehensive Model Evaluation requires commitment, resources, and cultural change, but the rewards justify the investment. Organizations that embrace this approach position themselves to harness the full potential of artificial intelligence while managing its risks effectively. In an era where AI capabilities are advancing rapidly and their impact is expanding across all aspects of society, rigorous Model Evaluation provides the foundation for responsible innovation and sustainable competitive advantage.